Docker

Web scraping has become increasingly popular in recent years, as businesses try to stay competitive and relevant in the ever-changing...

Event-driven architecture is the foundation that most modern applications follow: when an event happens, it triggers some other event in response. In the...
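Here is a minimal in-process sketch of the publish/subscribe idea behind event-driven architecture, in Python. The `EventBus` class and the `order.placed` / `invoice.created` event names are hypothetical and exist only for illustration. Real event-driven systems usually put a message broker between producers and consumers rather than calling handlers directly in memory.

```python
from collections import defaultdict
from typing import Any, Callable


class EventBus:
    """Hypothetical minimal publish/subscribe bus (illustration only)."""

    def __init__(self) -> None:
        # Map each event name to the handlers subscribed to it.
        self._handlers: dict[str, list[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, event: str, handler: Callable[[Any], None]) -> None:
        self._handlers[event].append(handler)

    def publish(self, event: str, payload: Any) -> None:
        # Deliver the payload to every handler registered for this event.
        for handler in list(self._handlers[event]):
            handler(payload)


bus = EventBus()
log: list[str] = []

# When an order is placed, emit a follow-up "invoice.created" event.
bus.subscribe("order.placed", lambda order: bus.publish("invoice.created", order["id"]))
bus.subscribe("invoice.created", lambda order_id: log.append(f"invoice for {order_id}"))

bus.publish("order.placed", {"id": 42})
print(log)  # ['invoice for 42']
```

One event ("order.placed") causes another ("invoice.created"), and neither handler knows about the other; they are coupled only through the event names.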

If you’re a developer hunting for Docker and Kubernetes-related resources, then you have finally arrived at the right place. Docker is a...

Raspberry Pi OS (previously called Raspbian) is the official operating system for all models of the Raspberry Pi. We will be...

CSV stands for “comma-separated values”. A CSV file is a simple text file in which the fields of each data record are separated by commas or...
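A short sketch using Python's standard `csv` module, assuming a small hypothetical dataset. It shows why a real CSV parser is preferable to naively splitting each line on commas: a field that itself contains a comma must be quoted, and the parser and writer handle that quoting for you.

```python
import csv
import io

# Hypothetical sample: a header row and one record whose second field
# contains a comma, so it is wrapped in double quotes.
raw = 'repo,topic\nawesome-compose,"Docker, Compose"\n'

# DictReader maps each record's fields to the header names.
rows = list(csv.DictReader(io.StringIO(raw)))
print(rows[0]["topic"])  # Docker, Compose

# csv.writer quotes fields that contain the delimiter (QUOTE_MINIMAL is the
# default) and ends each row with "\r\n" by default.
out = io.StringIO()
writer = csv.writer(out)
writer.writerow(["name", "note"])
writer.writerow(["pico", "edge, IoT"])
print(repr(out.getvalue()))  # 'name,note\r\npico,"edge, IoT"\r\n'
```

Splitting `awesome-compose,"Docker, Compose"` on commas would produce three fields instead of two; the `csv` module returns the intended two.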

MongoDB is a popular document-oriented database used to store and process data in the form of documents. In my last blog post,...

Customer churn is a million-dollar problem for businesses today. The SaaS market is becoming increasingly saturated, and customers can choose from...

Learn how Docker Desktop and the Docker CLI manage Linux containers and Wasm containers side by side.

This post was originally published by the author on the Docker DEV Community site. Community is at the heart of what Docker does....

It is a tool that reads your environment configuration from your Git repository and applies it to your Kubernetes...

If you’re a developer looking for a lightweight but robust framework that can help you in web development with fewer...

React is a JavaScript library for building user interfaces. It makes it painless to create interactive UIs. You can design...

Today, it’s hard to find any software built from scratch. Most applications built today use a combination of...

Docker: Unleashing the Digital Titan of the Tech World

With DockerLabs, you can learn Docker and access over 300 Docker tutorials designed for novices,...

Today, organizations are experiencing a shortage of software developers, and it is expected to hit businesses hard in 2022. Skilled developers...

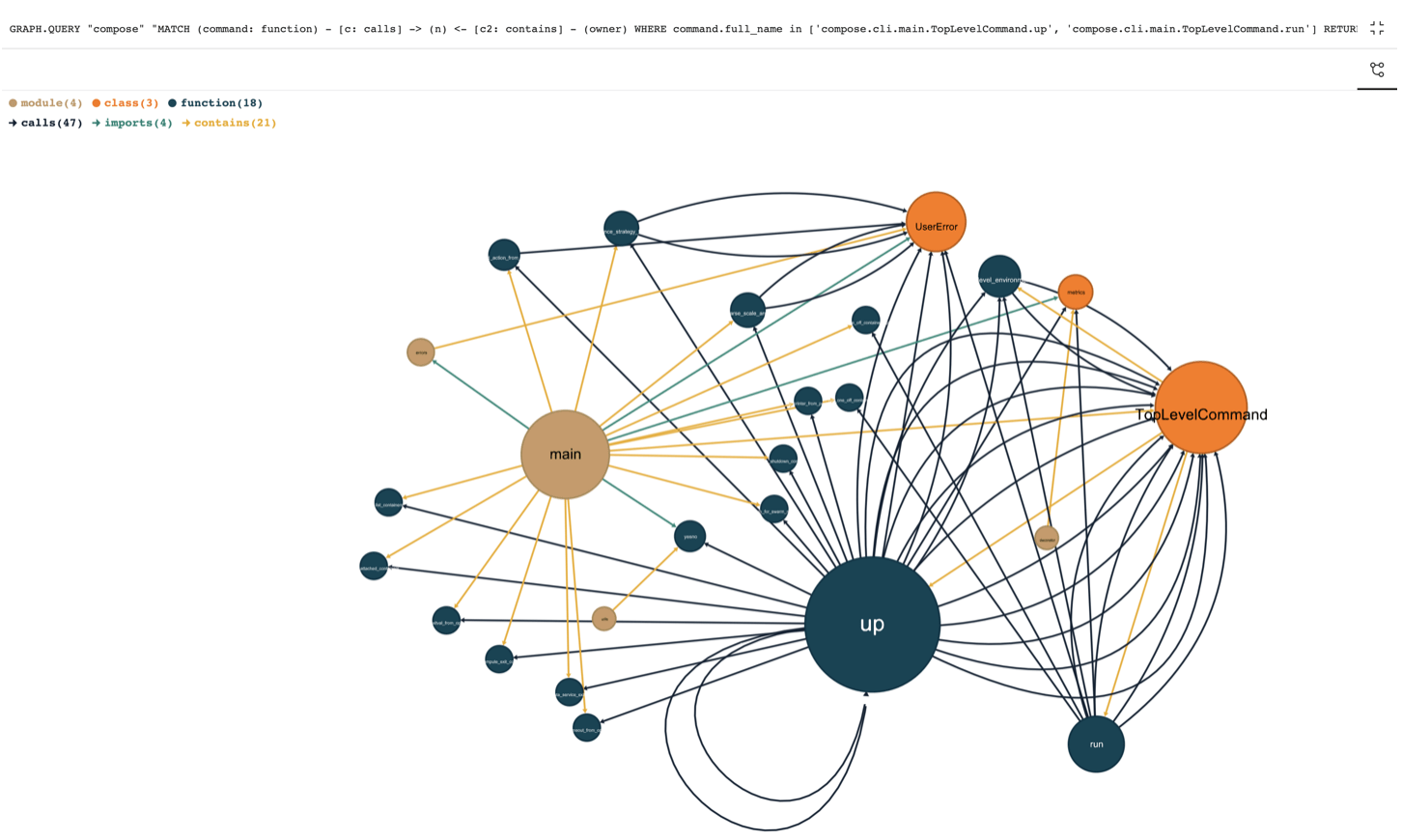

With over 14,700 stars and 2,000 forks, awesome-compose is a popular Docker repository that contains a curated list of Docker Compose samples. It helps...

The Raspberry Pi is a powerful computer despite its size. This portable device comes with a CPU based on the Advanced RISC...

DevOps is a way for development and operations teams to work together collaboratively. It is basically a cultural change. Organizations adopt...

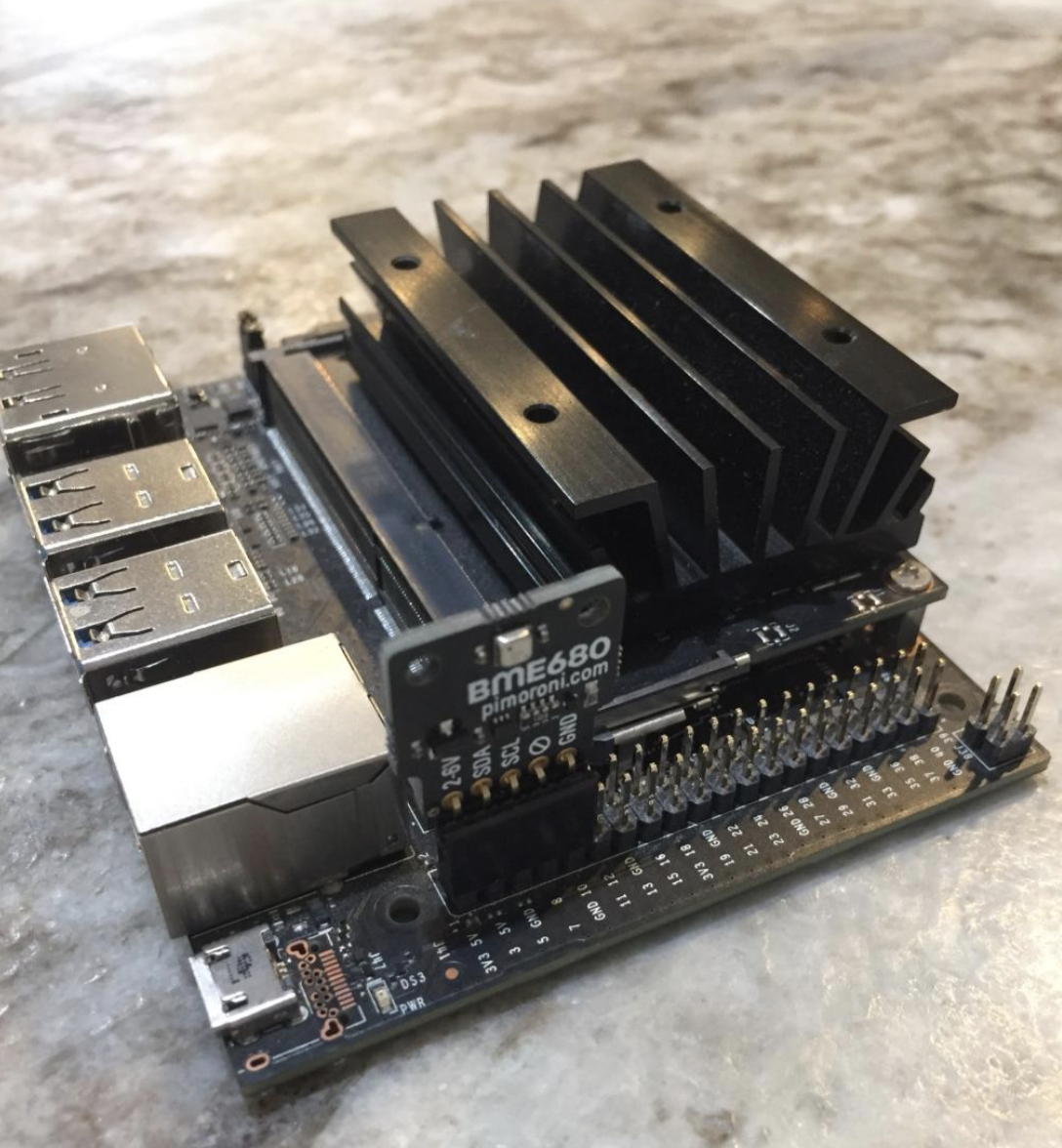

The BME680 is a digital 4-in-1 sensor with gas, humidity, pressure, and temperature measurement based on proven sensing principles. The state-of-the-art...

Booting from an SD card is the traditional way of running an OS on the NVIDIA Jetson Nano 2GB model, and it works...

Redis stands for REmote DIctionary Server. It is an open source, fast NoSQL database written in ANSI C and optimized for speed. Redis is an...

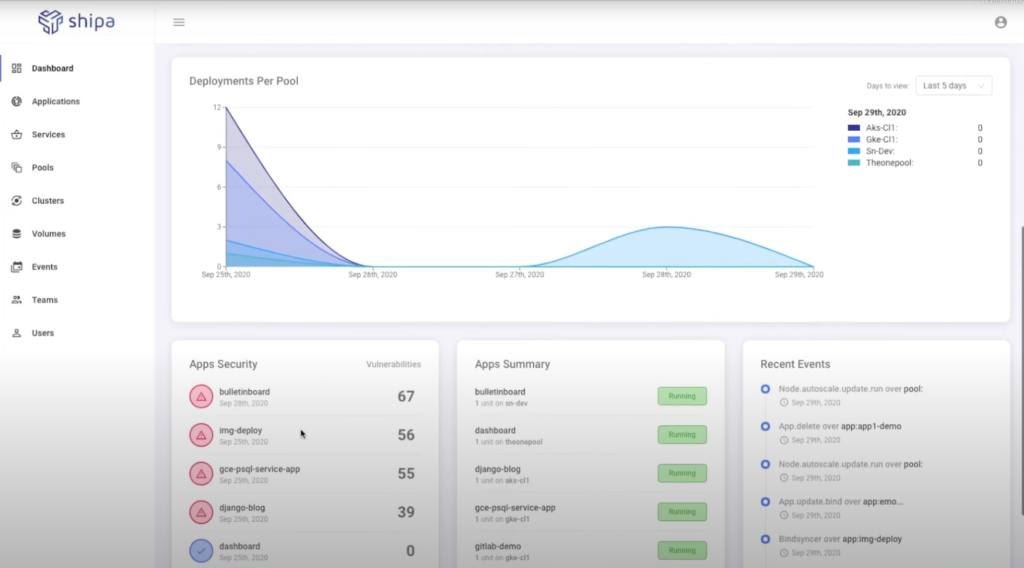

As tech stacks become more and more complex and businesses move at a fast pace, DevOps teams worldwide...

This tutorial walks you through building and running the sample Album Viewer application with Windows containers. The Album Viewer app is an ASP.NET...

Terraform infrastructure gets complex with every new deployment. The code helps you to manage the infrastructure uniformly. But as the organization grows,...

When it comes to running containers and using Kubernetes, it’s important to make security just as much of a priority as...

Today, every fast-growing business enterprise has to deploy new features of its app rapidly if it really wants to survive...

According to a recent Gartner report, “By the end of 2024, 75% of organizations will shift from piloting to operationalizing artificial...

Terraform is an infrastructure as code (IaC) tool that allows you to build, change, and version infrastructure safely and efficiently. This includes low-level...

Discord is one of the fastest-growing voice, video and text communication services used by millions of people in the world. It...

In May 2021, over 80,000 developers participated in the Stack Overflow Annual Developer Survey. Python traded places with SQL to become the third...

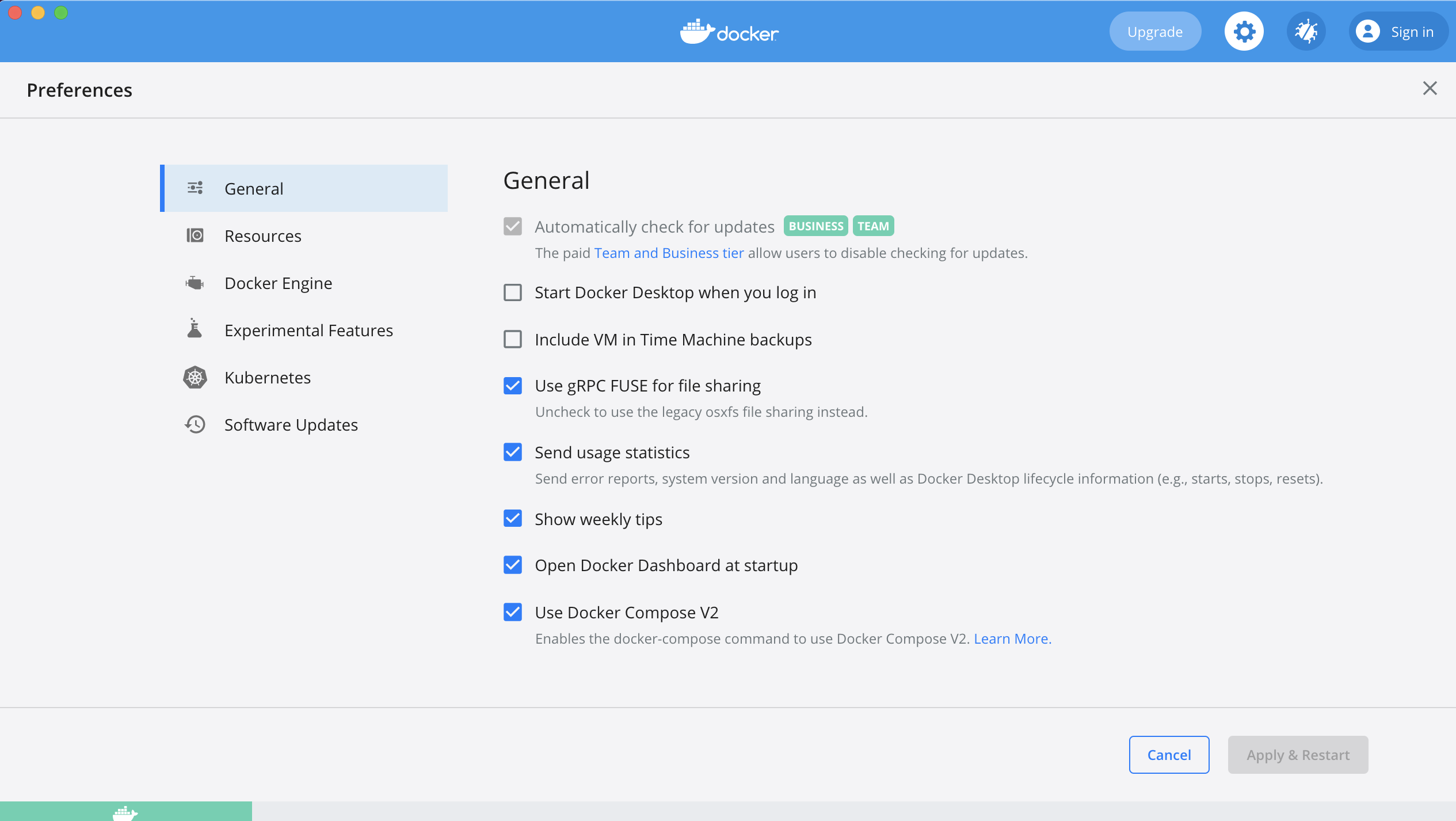

Docker Desktop 4.1.1 was released last week. This latest version supports Kubernetes 1.21.5, which was made available recently by the Kubernetes...

Creating a resume is the first step, and the biggest challenge, you face as a fresh graduate. Many graduates prefer entering...

If you’re an IoT Edge developer looking to build and deploy production-grade, end-to-end AI robotics applications, then check...

When I saw the RoboMaster S1 for the first time, I was completely stoked. I was impressed by its sturdy look....

Did you know? Around 94% of AI adopters are using or plan to use containers within a year. Containers are...

Are you frustrated with how much time it takes to create, deploy and manage an application on Kubernetes? Wouldn’t it be...

Application developers look to Redis and RedisTimeSeries to work with real-time Internet of Things (IoT) sensor data. RedisTimeSeries is a Redis...

With Docker Desktop 3.5.0, Docker introduced the Dev Environments feature for the first time. The Dev Environments feature is the foundation...

The RoboMaster S1 is an educational robot that provides users with an in-depth understanding of science, math, physics, programming, and more...

The NVIDIA Jetson Nano 2GB Developer Kit is the ideal platform for teaching, learning, and developing AI and robotics applications. It...

On June 19th, 2021, the Collabnix community is coming together with JFrog & Docker Bangalore Meetup Group to conduct a meetup...

Kubectl is a command-line interface for running commands against Kubernetes clusters. kubectl looks for a file named config in the $HOME/.kube directory. You can specify...
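A sketch of how kubectl decides which kubeconfig file(s) to read, written as a hypothetical Python helper (`kubeconfig_paths` is not part of any library). It assumes kubectl's documented precedence: an explicit `--kubeconfig` flag wins, otherwise the `KUBECONFIG` environment variable is treated as a list of files to merge, and only if neither is set does kubectl fall back to `$HOME/.kube/config`.

```python
import os
from typing import Mapping, Optional


def kubeconfig_paths(flag: Optional[str], env: Mapping[str, str], home: str) -> list[str]:
    """Hypothetical helper mirroring kubectl's kubeconfig lookup order."""
    # 1. An explicit --kubeconfig flag is used on its own (no merging).
    if flag:
        return [flag]
    # 2. KUBECONFIG may list several files separated by os.pathsep
    #    (":" on Linux/macOS, ";" on Windows); empty entries are skipped.
    value = env.get("KUBECONFIG", "")
    if value:
        return [p for p in value.split(os.pathsep) if p]
    # 3. Otherwise fall back to the default file in $HOME/.kube.
    return [os.path.join(home, ".kube", "config")]


print(kubeconfig_paths(None, os.environ, os.path.expanduser("~")))
```

Passing `env` and `home` as arguments, rather than reading them inside the function, keeps the helper easy to test without touching the real environment.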

On a typical installation, the Docker daemon manages all the containers. The Docker daemon controls every aspect of the container lifecycle....

Last year, Dockercon attracted 78,000 registrants and 21,000 conversations across 193 countries. This year, it was an even bigger event, attracting...

Did you know? Dockercon 2021 was attended by 80,000 participants on the first day. It was an amazing experience hosting “Docker...

Kubernetes (commonly referred to as K8s) is an orchestration engine for container technologies such as Docker and rkt that is taking...

Are you still looking for a solution that allows you to open multiple web browsers in Docker containers at the...

Rootless mode was introduced in Docker Engine v19.03 as an experimental feature for the first time. Rootless mode graduated from experimental...

RedisInsight is an intuitive and efficient GUI for Redis, allowing you to interact with your databases and manage your data—with built-in...

This is a complete guide, from installing all the required tools to actually dockerizing and running a Node.js application. But...

OSCONF 2021 is just around the corner and the free registration is still open for all DevOps enthusiasts who want to...

The Pinebook Pro is a Linux and *BSD ARM laptop from PINE64. It is built to be a compelling alternative to...

According to Gartner, “By 2024, low-code application development will be responsible for more than 65% of application development activity.” Low code...

Are you looking for a tool that can reduce your AWS bills while you’re a free tier user? If...

Docker Desktop is the preferred choice for millions of developers building containerized applications. With the latest Docker Desktop Community 3.0.0 Release, a new...

If you’re a FOSS enthusiast looking for a powerful little ARM laptop, the Pinebook Pro is what you need. The...

Today at the GPU Technology Conference (GTC) 2020, NVIDIA announced a new 2GB NVIDIA Jetson Nano. Last year, during...

Starting with v4.2.1, NVIDIA JetPack includes a beta version of NVIDIA Container Runtime with Docker integration for the Jetson platform. This...

With 100+ sessions, 200+ speakers and tons of sponsors, Arm DevSummit is going to kick off next month. It...

This article was originally published in the leading Open Source India magazine. Ajeet Singh Raina’s journey as a community leader began...

With over 126 million monthly users, 200 million games sold & 40 million MAU, Minecraft still remains one of the biggest...

Despite the immaturity of the container ecosystem and the lack of best practices, the adoption of Kubernetes is growing massively for...

Amazon Elastic Kubernetes Service (a.k.a. Amazon EKS) is a fully managed service that helps make it easier to run Kubernetes on...

“Individually We Specialise, Together We Transform.” Slack has grown to be an excellent tool for communities, both large and small, open...

Jenkins works perfectly well as a stand-alone open source tool but with the shift to Cloud native and Kubernetes, it invites...

The rapid adoption of cloud-based solutions in the IT industry is acting as the key driver for the growth of the...

If you’re looking for a $0, cloud-based, SaaS test automation development framework designed for your agile team, TestProject is the right...

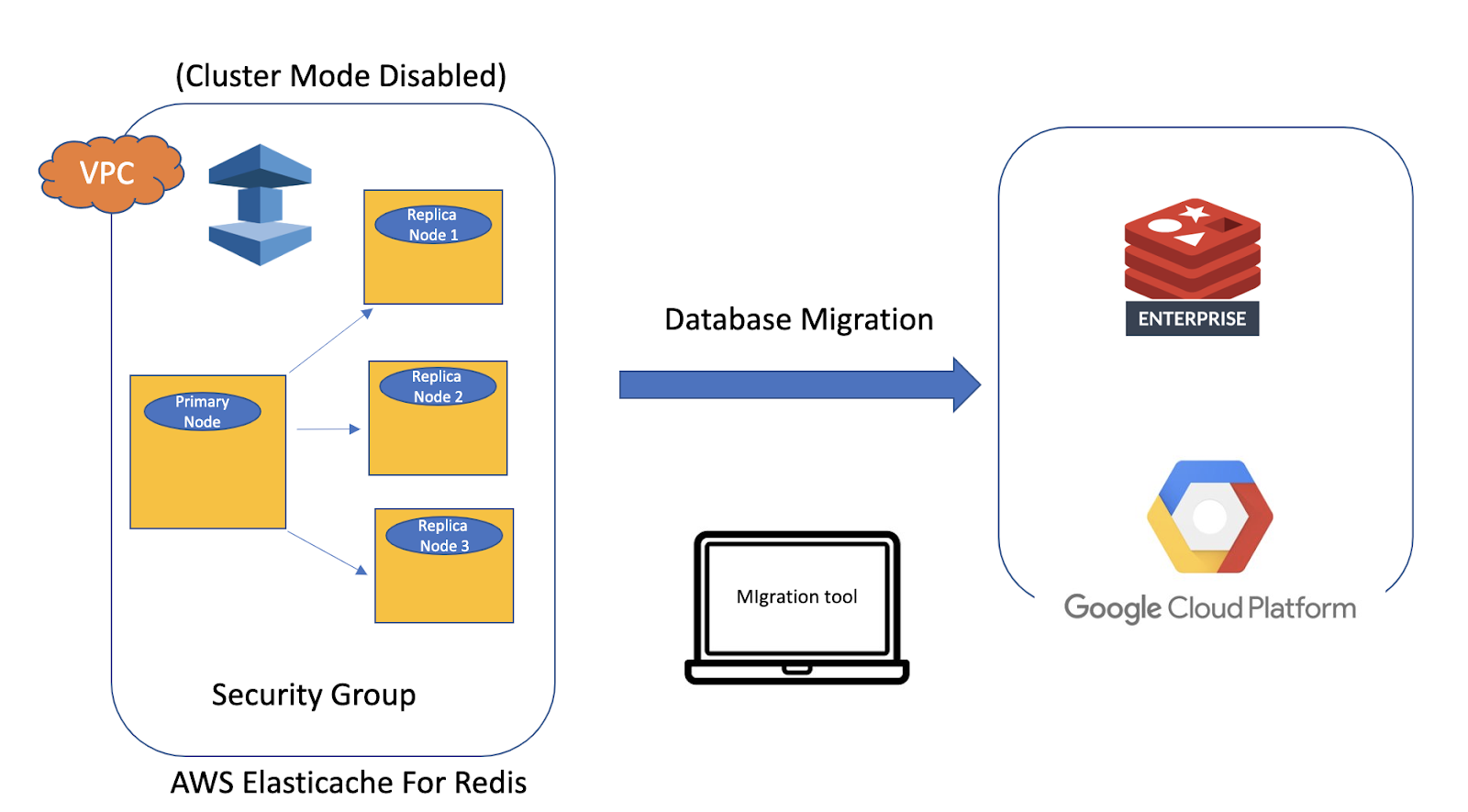

Are you looking for a tool that can help you migrate ElastiCache data to Redis Open Source or Redis Enterprise...

In my first post, I discussed how to build your first certified on-premises Kubernetes cluster using Docker Enterprise 3.0. In this...

Kubernetes provides a container-centric management environment. It orchestrates computing, networking, and storage infrastructure on behalf of user workloads. This provides much...

If you are looking for a tool that can inspect your Redis data, monitor health, and perform runtime server configuration...

If you are serious about monitoring your cluster health with real-time alerts, analyzing your cluster configuration, rebalancing as necessary, managing addition...

If you are looking for the easiest way to create a Redis Cluster on a remote cloud platform like Google Cloud Platform...

Redis Enterprise Software (RS) offers Redis Cluster. RS Cluster is just a set of Redis nodes (OS with Redis installed). It...

Redis Enterprise Software (earlier known as “Redis Labs Enterprise Cluster”) is a robust in-memory database that also persists data to disk. Redis...

DigitalOcean (affectionately called “DO”) is the “Docker Developer’s Platform”. If you’re a developer looking for a speedy way to spin up...

Yesterday I conducted a Kubernetes workshop for an audience of nearly 500 at SAP Labs, Bengaluru (India) during Docker Bangalore Meetup #50. The workshop...

If you are looking for the most effective real-time object detection algorithm that is open source and free to use,...

I conducted a Pico workshop for university students (Vellore Institute of Technology, Vellore & the University of Petroleum & Energy Studies, Dehradun)...

If you are looking for a small, affordable, low-powered system that comes by default with the power of modern AI...

Redis stands for REmote DIctionary Server. It is an open source, in-memory data structure store, used as a database, a caching...

2019 was a transformational year for Collabnix. With major initiatives like DockerLabs, the Pico project & Slack, the site attracted millions...

Last Friday, I was invited by Sumo Logic to talk about Kubernetes Monitoring & Best Practices. Over 200 attendees participated for...

The Grace Hopper Celebration of Women in Computing (GHC) is a series of conferences designed to bring the research and career...

If you are looking for a lightweight Kubernetes that is easy to install and perfect for Edge, IoT, CI and ARM,...

On the 3rd of October, I travelled to UPES Dehradun (around 1,500 miles) for a one-day session on “The Pico Project”...

In Part 1 of this blog series, I demonstrated how to deploy a certified Kubernetes cluster on-premises using Docker Enterprise 3.0....

Did you know? In the latest Docker 19.03 release, a new flag, `--gpus`, has been added to `docker run`, which allows...

Docker is officially supported on both Raspberry Pi 3 and 4. Installing Docker is just a matter of a single-line command. All...

If you have ever conducted a Docker on Raspberry Pi workshop during a meetup event, you surely understand the pain in bringing...

Did you know? Over 800 enterprise organizations use Docker Enterprise for everything from modernizing traditional applications to microservices and data science....

If you try to set up a Kubernetes cluster on a bare-metal system, you will notice that a LoadBalancer service always remains in the “pending”...

Two weeks ago, I travelled to Jaipur, over 1,000 miles from Bangalore, to deliver a Docker session. I was invited...

At the last Dockercon, dozens of new Docker CLI plugins were introduced. All of these CLI plugins will be available in upcoming Docker...

I am thrilled and excited to start a new open source project called “Pico”. Pico is a beta project which is...

DockerHub is a service provided by Docker for finding and sharing container images with your team. It is the world’s largest...

Two weeks ago, I wrote a blog post on how developers can now build ARM containers on Docker Desktop using docker...

Two weeks ago at Dockercon 2019 San Francisco, Docker & ARM demonstrated the integration of ARM capabilities into Docker Desktop Community...

Let’s talk about Docker in a GPU-Accelerated Data Center… Docker is the leading container platform which provides both hardware and software...

Docker CE 19.03.0 Beta 1 went public two weeks ago. It was the first release which arrived with sysctl support for...

Last week, Docker Community Edition 19.03.0 Beta 1 was announced and its release notes went public. Under this release, there were numerous...

To implement a microservice architecture and a multi-cloud strategy, Kubernetes today has become a key enabling technology. The bedrock of Kubernetes...

Docker’s Birthday Celebration is not just about cakes, food and party. It’s actually a global tradition that is near and dear to our heart...

If you are looking for a Desktop Enterprise software solution for creating & delivering production-ready containerized applications in a simplified &...

If you want to get started with Kubernetes on your Laptop running Windows 10, Docker Desktop for Windows CE is the...

Ansible is an open-source engine that automates configuration management, application deployment, and other DevOps tasks. Ansible is simple, agentless IT automation...

Last week I purchased a Raspberry Pi Infrared IR Night Vision Surveillance Camera Module 500W Webcam. This webcam features a 5MP OmniVision 5647 sensor...

Docker Desktop for Windows 2.0.0.3 Release is available. This release comes with Docker Engine 18.09.2, Compose v1.23.2 & Kubernetes v1.10.11. One...

Docker Engine v18.09.1 went GA last month. It was made available for both the Community and Enterprise users. Docker Enterprise is...

Let’s talk about Docker inside the datacenter... If you are a datacenter administrator and still scouring through a spreadsheet of “unallocated”...

On the 2nd day of Dockercon, Docker Inc. open sourced the Compose on Kubernetes project. This project provides a simple way to define cloud...

Say bye to Kompose! Let’s begin with a problem statement: “The Kubernetes API is quite HUGE. More than 50...

A Docker Swarm consists of multiple Docker hosts which run in swarm mode and act as managers (to manage membership and...

Last week I attended Dockercon 2018 EU which took place at Centre de Convencions Internacional de Barcelona (CCIB) in Barcelona, Spain....

Are you looking for Docker-related interview questions categorised into Beginner, Intermediate & Advanced levels? If yes, then you...

Under the newer Docker Engine 18.09 release, a new feature called CE-EE Node Activate has been introduced. It allows a user...

At Dockercon 2018 this year, you can expect 2,500+ participants, 52 breakout sessions, 11 Community Theatres, 12 workshops, 100+...

Who is a DevOps engineer? DevOps engineers are a group of influential individuals who encapsulate depth of...

Let’s talk about Kubernetes Deployment Challenges… As monolithic systems become too large to deal with, many enterprises are drawn to...

Docker-app allows you to share your applications on Docker Hub directly. This tool not only makes Compose files shareable but also provides...

Let’s talk about RBAC under Docker EE 2.0… Kubernetes RBAC (Role-Based Access Control) security context is a fundamental part of...

Istio is a completely open source service mesh that layers transparently onto existing distributed applications. Istio v1.0 was announced last...

Nginx (pronounced “engine-x”) is an open source reverse proxy server for HTTP, HTTPS, SMTP, POP3, and IMAP protocols, as...

Are you new to Kubernetes? Want to build your career in Kubernetes? Then welcome! You are in the right place. This...

Let’s begin with a problem statement! DockerHub is a cloud-based registry service which allows you to link to code repositories,...

OpenUSM is a modern approach to Server Management, Insight Logs Analytics and Machine Learning solution integrated with monitoring & logging pipeline...

Scenario: Say you have built Docker applications (legacy in nature, like network traffic monitoring, system management, etc.) which are expected to be directly...

If you’re a developer and have been spending a lot of time developing apps recently, you already understand a whole new...

A new Docker Enterprise Engine 18.03.0-ee-1 has been released. This is a stand-alone engine release intended for EE Basic customers....

I still remember those days (back in 2006-07) when I started my career as an IT Consultant in a Telecom R&D centre where I...

Did you know? There are more than 300,000 Docker Compose files on GitHub. Docker Compose is a tool for defining and...

Yet another amazing Dockercon! I attended Dockercon 2018 last week, which happened in the most geographically blessed city...

Docker for Mac 18.05.0 CE Release went GA last month. With this release, you can now select your orchestrator directly...

In a recent survey of 191 top Fortune 1000 executives, 69% said they believe that conferences present a wealth of...

Docker is a full development platform for creating containerized apps, and Docker for Mac is the most efficient way to start...

Docker for Mac 18.04.0 CE Edge Release went GA early last month. This was the first time Kubernetes version 1.9.6...

Is Function a new container? Why so much buzz around serverless computing like OpenFaaS? One of the biggest tech trends of...

It’s 2018! Let Containers Manage Your Datacenter... Containers are changing the dynamics of the modern data center. It is a growing...

The Only Kubernetes Solution for Multi-Linux, Multi-OS and Multi-Cloud Deployments is right here… Docker Enterprise Edition (EE) 2.0 Final GA release is...

“As you grow up, make sure you have more networking opportunities than chances, and more collaborative approach than just an acquaintance....

Docker 18.03.0 CE Release is now available under Docker for Mac Platform. Docker for Mac 18.03.0 CE is now shipped with...

Are you new to CI/CD? Continuous Integration (CI) is a development practice that requires developers to integrate code into a shared...

Say bye to kubectx! I have been a great fan of kubectx and kubectl, which have been a fast way...

Docker for Mac 18.01.0 CE is available for the general public. It holds experimental Kubernetes release running on Linux Kernel 4.9.75,...

Docker For Mac 17.12 GA is the first release which includes both the orchestrators – Docker Swarm & Kubernetes under the...

“LinuxKit is NOT designed with an intention to replace any of traditional OS like Alpine, Ubuntu, Red Hat etc. It...

Docker support for Kubernetes is now in private beta. As a Docker Captain, I was able to be a part of...

The LinuxKit GitHub repository has already crossed 1,800 commits and 3,600+ stars, and has been forked 420+ times since April 2017 when it was...

In my last blog post, I talked about how to get started with NVIDIA docker & interaction with NVIDIA GPU system. I...

Docker is the leading container platform which provides both hardware and software encapsulation by allowing multiple containers to run on the same...

Here comes the most awaited feature of 2017 – “Building Docker Swarm cluster which includes all Windows cluster, or a hybrid...

By default, Docker assigns IPv4 addresses to containers. Does Docker support the IPv6 protocol too? If yes, how complicated is it to get it...

The LinuxKit GitHub repository recently crossed 3,000 stars, has been forked 300+ times and has added 60+ contributors. Just a 5-month-old project and...

Let’s talk about Dockerized Elastic Stack… Elastic Stack is an open source solution that reliably and securely takes data from any...

Docker For Mac 17.06 CE edition is the first Docker version built entirely on the Moby Project. In case you’re new,...

“..Its Time to Talk about Bring Your Own Components (BYOC) now..” The Moby Project is gaining momentum day by day. If...

Today I spoke at Docker Bangalore Meetup which took place in IBM India Systems Development Lab(ISL) – an R&D Division located at...

Docker 17.06.0-ce-RC5 was announced 5 days ago and is available for testing. It brings numerous new features & enablements under this new...